I keep hearing the same argument from skeptics. AI readiness assessments are just another consulting exercise. They produce a score, a slide deck, and then nothing changes. I get the frustration. The market is full of generic questionnaires that tell you what you already know.

But dismissing the concept because the execution is often bad is the wrong move.

The real question is not whether an AI readiness assessment has value. The question is whether yours measures what actually matters. For most organizations, the answer is no.

The Case Against AI Readiness Assessments, and Where It Falls Apart

Critics make a fair point when they say many assessments are surface-level. A 2025 survey from Cisco found that 65% of leaders do not know when or where to apply AI, and 52% lack foundational understanding of how the technology works. Generic assessments do not fix that. They confirm the confusion without creating a path forward.

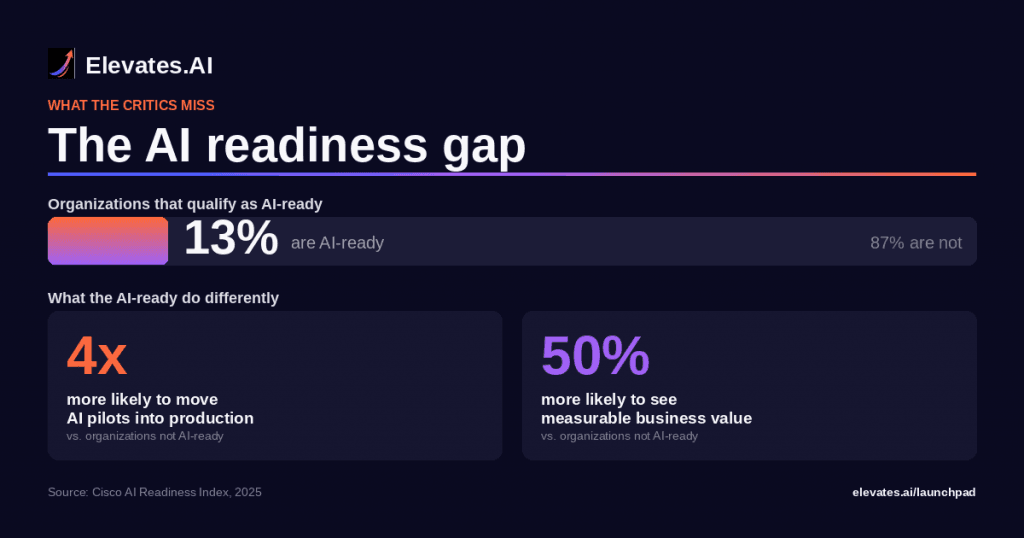

But the data tells a different story when assessments are done right. Cisco’s AI Readiness Index (2025) found that only 13% of organizations globally qualify as AI-ready. The organizations that do? They are 4x more likely to move AI pilots into production and 50% more likely to see measurable business value.

That gap between the 13% and everyone else is not about budget or access. It is about knowing where the gaps are before committing resources. That is exactly what a well-built AI readiness assessment delivers.

Why the AI Readiness Assessment Debate Misses the Point

The debate around AI readiness assessments usually centers on whether you need one before starting. That framing misses the real issue. The problem is not the assessment itself. The problem is what most assessments measure.

McKinsey’s 2025 State of AI report found that 88% of organizations use AI in at least one function, but only 39% see measurable enterprise-level impact. ISG reported that just 31% of AI use cases reach full production, and only 25% achieve projected ROI. Those numbers represent billions in misallocated investment.

Most assessments focus on infrastructure and data. They ask whether your cloud architecture can support AI workloads. They check for data lakes and API readiness. Those are necessary, but they are table stakes. The organizations that count among the 88% using AI but not among the 39% seeing enterprise-level impact already have the infrastructure. What they are missing is the alignment layer: the intersection of capability, process readiness, and strategic sequencing.

What a Real AI Readiness Assessment Measures

An effective AI readiness assessment goes beyond technology inventory. It evaluates five areas that generic tools routinely miss.

Process readiness. Which workflows can absorb AI today without restructuring? Where are the manual bottlenecks that AI can address in 30 days versus 12 months? Most assessments skip this entirely and default to department-level recommendations that never translate to action.

Workforce alignment. Do the people running daily operations understand what AI will change about their work? 52% of organizations lack AI talent and skills, according to Cisco’s 2025 index. But the gap is not just about hiring data scientists. It is about preparing existing teams for the shift.

Strategic sequencing. Which AI investments should come first, second, and third? Without sequencing, organizations end up with tool sprawl and competing priorities. The 60% of companies reporting little to no benefit from AI investment often made their biggest bets without a sequencing framework.

Governance fit. 91% of organizations need better AI governance and transparency (Cisco, 2025). A readiness assessment that does not evaluate your governance gaps is incomplete by definition.

Measurement infrastructure. If you cannot measure baseline performance before AI deployment, you cannot measure impact after. Most assessments do not check whether organizations have the metrics in place to know if AI is working.
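The measurement point comes down to simple arithmetic, which a short Python sketch can make concrete. Everything here is an illustrative assumption rather than part of any particular assessment tool: the function name `relative_change`, the metric (minutes to process one invoice), and the sample numbers are all hypothetical.

```python
from statistics import mean


def relative_change(baseline, after):
    """Fractional change of the mean of `after` relative to the mean of `baseline`."""
    if not baseline:
        # Without a recorded baseline there is nothing to compare against.
        raise ValueError("no baseline recorded; impact cannot be measured")
    if not after:
        raise ValueError("no post-deployment measurements recorded")
    base = mean(baseline)
    if base == 0:
        raise ValueError("baseline mean is zero; relative change is undefined")
    return (mean(after) - base) / base


# Hypothetical metric: minutes to process one invoice, before and after deployment.
baseline_minutes = [12.0, 10.0, 14.0]
after_minutes = [9.0, 9.0, 9.0]

change = relative_change(baseline_minutes, after_minutes)
print(f"Change in processing time: {change:+.0%}")
```

The function refuses to return a number when the baseline is empty. That is the situation an organization is in when it deploys AI before capturing baseline performance.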

The Cost of Skipping the AI Readiness Assessment

Gartner has reported that 85% of AI projects fail to deliver expected value. Industry benchmarks put AI pilot failure rates between 70% and 85%. Those failures are not random. They follow a pattern: organizations that skip or rush the assessment phase are significantly more likely to experience project failure.

The cost is not just the failed pilot. It is the opportunity cost of the pilots you did not pursue because resources went to the wrong use case first. It is the cultural cost when teams experience AI failure and become resistant to the next initiative.

A structured AI readiness assessment takes 60 seconds to start at Elevates.AI. The alternative, deploying AI based on vendor demos and executive enthusiasm, takes months and six figures to fail.

How to Tell If Your AI Readiness Assessment Is the Right One

Not all assessments are created equal. If yours only returns a score and a maturity tier, it is not enough. The right assessment produces three things.

A gap map, not just a score. You need specific, actionable gaps organized by priority. A score of 3.2 out of 5 means nothing unless you know which gaps, if closed, would produce the most immediate value.

A 90-day action plan. The assessment should connect directly to implementation. If the output is a report that sits in a shared drive, the critics are right: it was a waste of time. The output should be a sequenced roadmap with concrete first steps.

Integration with real tools. The assessment should connect to a curated marketplace of tools matched to your specific gaps. Generic tool lists do not help. Contextual recommendations based on your assessed needs do.
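To show the difference between a single score and a gap map, here is a minimal Python sketch. It rests on stated assumptions: the `Gap` class, the 1-to-5 value and effort scales, and the value-over-effort ranking are hypothetical choices made for illustration, not the method of any specific assessment. The sample gaps are invented.

```python
from dataclasses import dataclass


@dataclass
class Gap:
    area: str
    description: str
    value: int   # assumed scale: estimated value if closed, 1 (low) to 5 (high)
    effort: int  # assumed scale: estimated effort to close, 1 (low) to 5 (high)

    def __post_init__(self):
        # Keep both estimates on the assumed 1-5 scale (also avoids dividing by zero).
        for name in ("value", "effort"):
            if not 1 <= getattr(self, name) <= 5:
                raise ValueError(f"{name} must be between 1 and 5")

    @property
    def priority(self) -> float:
        # Hypothetical heuristic: more value for less effort ranks higher.
        return self.value / self.effort


def build_gap_map(gaps):
    """Return gaps ordered from highest to lowest priority."""
    return sorted(gaps, key=lambda g: g.priority, reverse=True)


# Invented sample gaps, for illustration only.
gaps = [
    Gap("Governance fit", "No approval policy for AI use cases", value=3, effort=2),
    Gap("Measurement infrastructure", "No baseline metrics for target workflows", value=5, effort=2),
    Gap("Workforce alignment", "Operations teams not briefed on workflow changes", value=4, effort=4),
]

gap_map = build_gap_map(gaps)
for rank, gap in enumerate(gap_map, start=1):
    print(f"{rank}. {gap.area}: {gap.description} (priority {gap.priority:.2f})")
```

An average across these gaps would hide the ordering. The ranked list says what to close first, which is the input a 90-day plan needs.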

Frequently Asked Questions

What is an AI readiness assessment?

An AI readiness assessment is a structured evaluation that measures an organization’s preparedness to adopt, deploy, and scale AI across operations. It typically examines data quality, infrastructure, workforce skills, governance maturity, and strategic alignment to identify gaps before committing resources.

Are AI readiness assessments worth the investment?

Yes, when they produce actionable outputs. Organizations that complete a thorough AI readiness assessment before implementation are significantly more likely to reach production and see measurable ROI, according to data from Cisco and ISG. The investment is small relative to the cost of a misaligned AI deployment.

How long does an AI readiness assessment take?

It depends on the assessment. Enterprise consulting engagements can take weeks. Elevates.AI offers a 60-second starting assessment that generates a gap analysis and 90-day roadmap, making it possible to get actionable results the same day.

What should an AI readiness assessment measure beyond technology?

The most effective assessments measure process readiness, workforce alignment, strategic sequencing, governance fit, and measurement infrastructure. Technology alone does not predict AI success. Organizational and operational readiness are equally important.

How is an AI readiness assessment different from an AI maturity model?

An AI maturity model describes stages of organizational development, typically on a scale from initial to optimized. An AI readiness assessment is diagnostic and action-oriented. It identifies specific gaps and produces a prioritized plan to address them, rather than simply labeling a maturity stage.

Start With What You Actually Need to Know

If your AI investments are not producing the results you expected, the problem is probably not the technology. It is the gap between where your organization is and where it needs to be. Take the 60-second Elevates.AI assessment and get a gap analysis with a 90-day roadmap built for your specific situation.
