I was reading a piece from IT Brew last week in which they tested three AI readiness assessment tools back-to-back. One of them had five questions. Five. The result was a stage label and a prompt to book a workshop.

That is not an assessment. That is a lead capture form with a maturity label stapled to it.

The question IT Brew raised is worth taking seriously: are AI readiness assessments actually valuable? The answer depends entirely on what the tool is measuring. Most of them measure comfort with AI. Very few measure operational readiness for it. That gap is where organizations waste six- and seven-figure sums on pilots that never reach production.

Three types of AI readiness assessment, and only one that matters

Alan Brown, a professor at the University of Exeter, told IT Brew there are three categories of AI readiness tools. The first is awareness tools. These ask high-level questions about your comfort with AI, your interest in adoption, and your general strategic direction. They produce a label. You are “exploring,” “planning,” or “scaling.” The output is a snapshot, not a plan.

The second category is diagnostic tools. These go deeper into specific capabilities. They might evaluate your data infrastructure, your governance maturity, and your talent bench. The output gives you more signal, but often without prioritization.

The third category is benchmarking tools. These score you against a framework, compare you to industry peers, and produce actionable recommendations tied to your specific gaps. The output is a roadmap, not a report card.

Most enterprise AI readiness assessments live in category one. They were built to generate leads for consulting engagements, not to give clarity to the organization taking them. That is the structural problem.

What a shallow AI readiness assessment actually misses

When an assessment asks five high-level questions, it cannot evaluate what actually determines whether an AI initiative succeeds or fails. It cannot assess data accessibility. Most enterprises have data, but it is trapped in PDFs, spreadsheets, and legacy CRMs that AI models cannot directly read. KPMG found that data quality, accessibility, and governance are the top blockers to AI success. A five-question tool does not surface that.

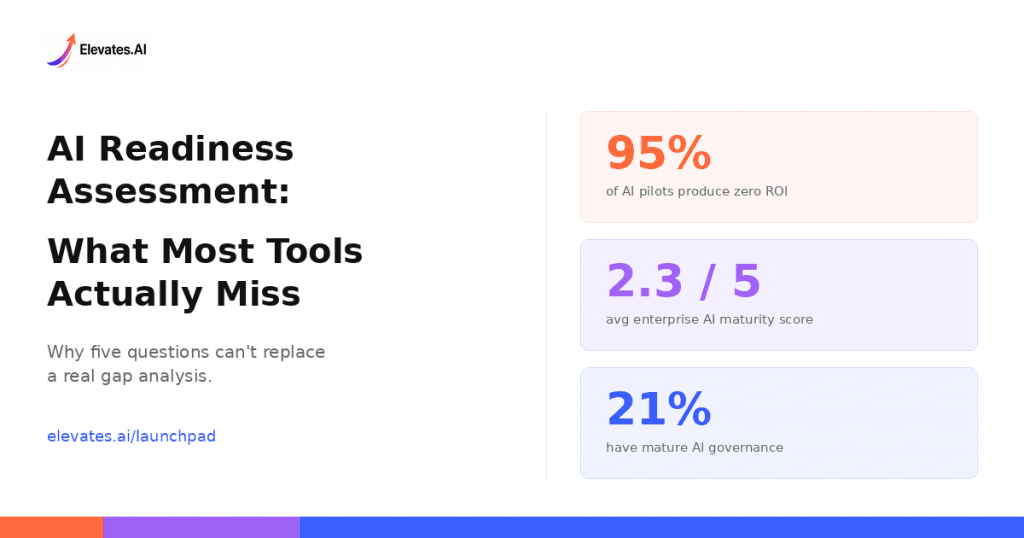

It cannot assess governance maturity. Deloitte’s 2026 State of AI in the Enterprise report surveyed 3,235 leaders across 24 countries and found that 85% of companies expect to deploy AI agents, but only 21% have a mature governance model for them. A shallow assessment does not distinguish between an organization that has governance on a whiteboard and one that has it in production.

It cannot assess sequencing readiness. McKinsey’s 2026 AI Trust Maturity Survey found that the average enterprise AI maturity score is 2.3 out of 5. Only 1% of enterprises consider their AI strategy mature. The question is not whether an organization is “ready” in some abstract sense. The question is what should come first, second, and third, given its specific constraints. That is a sequencing problem, and no five-question survey can solve it.

It cannot assess the risk of talent concentration. If only two engineers understand the AI system and both leave, the organization inherits fragility. This pattern is common enough in enterprise AI to be treated as a structural risk category. Assessment tools that do not ask how knowledge is distributed miss one of the most predictable failure modes.

What a real AI readiness assessment should evaluate

A credible AI readiness assessment evaluates at least five dimensions and does so with sufficient depth to produce a prioritized action plan, not just a maturity label.

Data foundations. Is your data accessible, clean, and governed? Can your systems feed an AI model without first doing six months of ETL work? Is your data architecture documented, or does it exist in two people’s heads?

Infrastructure readiness. Can your current systems support inference workloads? Are you running on-premises hardware that cannot scale, or have you already migrated to cloud infrastructure that can handle model deployment? Many organizations underestimate the lift of cloud migration when they begin AI projects.

Governance maturity. Do you have policies for AI model monitoring, bias detection, data privacy compliance, and decision accountability? Or are these conversations that have not happened yet? The EU AI Act and emerging regulations across North America are making governance a legal requirement, not a nice-to-have.

Talent and culture. Do you have the people to build, deploy, and maintain AI systems? Is AI literacy distributed across the organization, or concentrated in a small team? Fifty-two percent of organizations say they lack the talent to execute AI initiatives. An assessment that does not surface this is incomplete.

Strategic alignment. Is your AI investment tied to measurable business outcomes, or is it driven by competitive anxiety? BCG found that only 4% of companies have achieved enterprise-wide AI deployment. The other 96% are stuck in pilots or planning. The difference is almost always strategic alignment, not technical capability.
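To make the difference between a maturity label and a prioritized gap list concrete, here is a minimal Python sketch. The 1-to-5 scale, the label thresholds, the target scores, and the function names `maturity_label` and `ranked_gaps` are all hypothetical; they do not describe how any particular assessment tool scores organizations.

```python
from statistics import mean

# Hypothetical 1-5 scores for the five dimensions discussed above.
DIMENSIONS = ("data", "infrastructure", "governance", "talent", "strategy")


def maturity_label(scores):
    """What a shallow tool returns: one averaged stage label (thresholds are made up)."""
    avg = mean(scores[d] for d in DIMENSIONS)
    if avg < 2:
        return "exploring"
    if avg < 3.5:
        return "planning"
    return "scaling"


def ranked_gaps(scores, targets):
    """What a useful tool returns: each dimension's shortfall, largest gap first."""
    gaps = {d: targets[d] - scores[d] for d in DIMENSIONS if scores[d] < targets[d]}
    # Sort by gap size descending; break ties alphabetically for a stable order.
    return sorted(gaps.items(), key=lambda item: (-item[1], item[0]))


scores = {"data": 1, "infrastructure": 4, "governance": 2, "talent": 3, "strategy": 4}
targets = {d: 3 for d in DIMENSIONS}  # hypothetical "ready to start" bar

print(maturity_label(scores))        # planning
print(ranked_gaps(scores, targets))  # [('data', 2), ('governance', 1)]
```

With these invented numbers, the averaged label (“planning”) looks respectable, while the ranked gaps show that data foundations are the binding constraint and governance is next. Averaging is exactly what hides the blocker.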

The real test of an AI readiness assessment

Here is a simple way to evaluate any AI readiness assessment tool: Does the output tell you what to do next? Not in a generic sense. Not “improve your data governance.” Specifically. Which initiative should come first, given your constraints? What are the dependencies? What should you not do yet, and why?
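Mechanically, “what comes first, given the dependencies” is a topological ordering problem. The Python sketch below is one way to express it, assuming each initiative has a set of prerequisite initiatives and a single business-priority score. The initiative names, scores, and the `sequence_initiatives` function are hypothetical illustrations, not the method of any specific assessment tool. Among the initiatives whose prerequisites are already done, it always picks the highest-priority one next (Kahn’s algorithm with a priority queue).

```python
import heapq


def sequence_initiatives(initiatives, deps, priority):
    """Order initiatives so every prerequisite comes before what depends on it.

    initiatives: list of initiative names (every prerequisite must also appear here)
    deps: dict mapping an initiative to the set of initiatives it depends on
    priority: dict mapping an initiative to a priority score (higher = sooner)
    Raises ValueError if the dependencies contain a cycle.
    """
    remaining = {name: set(deps.get(name, ())) for name in initiatives}
    dependents = {name: [] for name in initiatives}
    for name, prereqs in remaining.items():
        for p in prereqs:
            dependents[p].append(name)

    # Min-heap on negated priority acts as a max-heap; the name breaks ties.
    ready = [(-priority.get(n, 0), n) for n, prereqs in remaining.items() if not prereqs]
    heapq.heapify(ready)

    order = []
    while ready:
        _, name = heapq.heappop(ready)
        order.append(name)
        # Completing this initiative may unblock the ones that depend on it.
        for child in dependents[name]:
            remaining[child].discard(name)
            if not remaining[child]:
                heapq.heappush(ready, (-priority.get(child, 0), child))

    if len(order) != len(initiatives):
        raise ValueError("dependency cycle among initiatives")
    return order


initiatives = ["data_catalog", "cloud_migration", "governance_policy", "agent_pilot", "upskilling"]
deps = {
    "agent_pilot": {"data_catalog", "cloud_migration", "governance_policy"},
    "governance_policy": {"data_catalog"},
}
priority = {"agent_pilot": 10, "cloud_migration": 5, "data_catalog": 4,
            "governance_policy": 3, "upskilling": 2}

print(sequence_initiatives(initiatives, deps, priority))
# ['cloud_migration', 'data_catalog', 'governance_policy', 'agent_pilot', 'upskilling']
```

With these made-up inputs the agent pilot has the highest priority, yet it lands fourth because three prerequisites must come first. That ordering is the “what not to do yet, and why” answer in concrete form.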

An MIT study found that 95% of generative AI pilots produced zero ROI. That statistic should be disqualifying for any approach that skips the readiness step. The organizations that avoided that outcome were not the ones that adopted AI first. They were the ones that assessed their constraints honestly before they started.

IT Brew’s testing found that the most useful assessment in their comparison was the one that produced 49 specific recommendations tied to the organization’s actual operational gaps. Not a stage label. Not a workshop invitation. A prioritized set of actions the team could begin executing immediately.

That is the standard every AI readiness assessment should meet.

How the Elevates.AI readiness assessment works differently

The Elevates.AI Launchpad assessment was built to solve the problems described above. It takes 60 seconds to complete, but the output is not a maturity label. It is a gap analysis across the dimensions that actually determine AI success, followed by a 90-day implementation roadmap prioritized by your specific constraints.

The assessment identifies where your organization sits on the readiness curve, which gaps are blocking progress, what should come first based on dependencies and business priorities, and which tools in our curated marketplace match your assessed needs. The goal is not to tell you whether you are ready. The goal is to tell you what to do next.

What is an AI readiness assessment?

An AI readiness assessment is a structured evaluation of an organization’s preparedness to adopt and implement AI. It measures capabilities across strategy, data, infrastructure, talent, and governance to identify gaps and priorities. The best assessments produce actionable roadmaps, not just maturity scores.

How long should an AI readiness assessment take?

Assessment length varies widely. Some tools take five minutes and produce a stage label. Others require weeks of consulting work. The Elevates.AI Launchpad assessment takes 60 seconds and generates a gap analysis with a 90-day implementation roadmap, balancing speed with depth.

Are free AI readiness assessments worth taking?

It depends on the output. A free assessment that produces a generic label like “planning stage” has limited value. A free assessment that identifies specific gaps and recommends prioritized actions is highly valuable. Evaluate any tool by asking: does the output tell me what to do next?

What is the difference between AI readiness and AI maturity?

AI readiness measures whether an organization can successfully start or scale AI initiatives right now. AI maturity measures where an organization sits on a progression from early exploration to enterprise-wide integration. Readiness is forward-looking and action-oriented. Maturity is a benchmark. Both are useful, but readiness drives decisions.

Why do most AI pilots fail to reach production?

Most AI pilots fail because organizations skip the readiness step. They deploy tools before assessing data quality, governance maturity, or talent capacity. An MIT study found that 95% of generative AI pilots produced zero ROI. The organizations that succeed are the ones that assess constraints before committing resources.

If your AI investments are not producing what you expected, the problem most likely started before your first deployment. Take the 60-Second AI Readiness Assessment and find out what is actually blocking your progress.